Conversational AI has entered the chat, and it is here to redefine customer conversations. Thanks to its advanced capabilities, automated conversations that used to be rule-based and scripted have become dynamic and intuitive. But what does this mean for businesses?

This guide walks you through everything you need to know about conversational AI for customer conversations. You will learn what it is, how it works and how it differs from conventional chatbots. Then, we will explore how it is redefining customer conversations, ways to implement it and the best practices for using it effectively.

What is Conversational AI?

Conversational artificial intelligence (AI) refers to the use of AI technologies to simulate human-like conversations. It uses large volumes of data and a combination of technologies to understand and respond to human language intelligently.

The best part is that the AI learns from each interaction and refines its responses, much like a human would. Some examples of rudimentary conversational AI that you may be familiar with are chatbots and virtual agents.

Before exploring how this technology has evolved, let's look at how advanced conversational AI works.

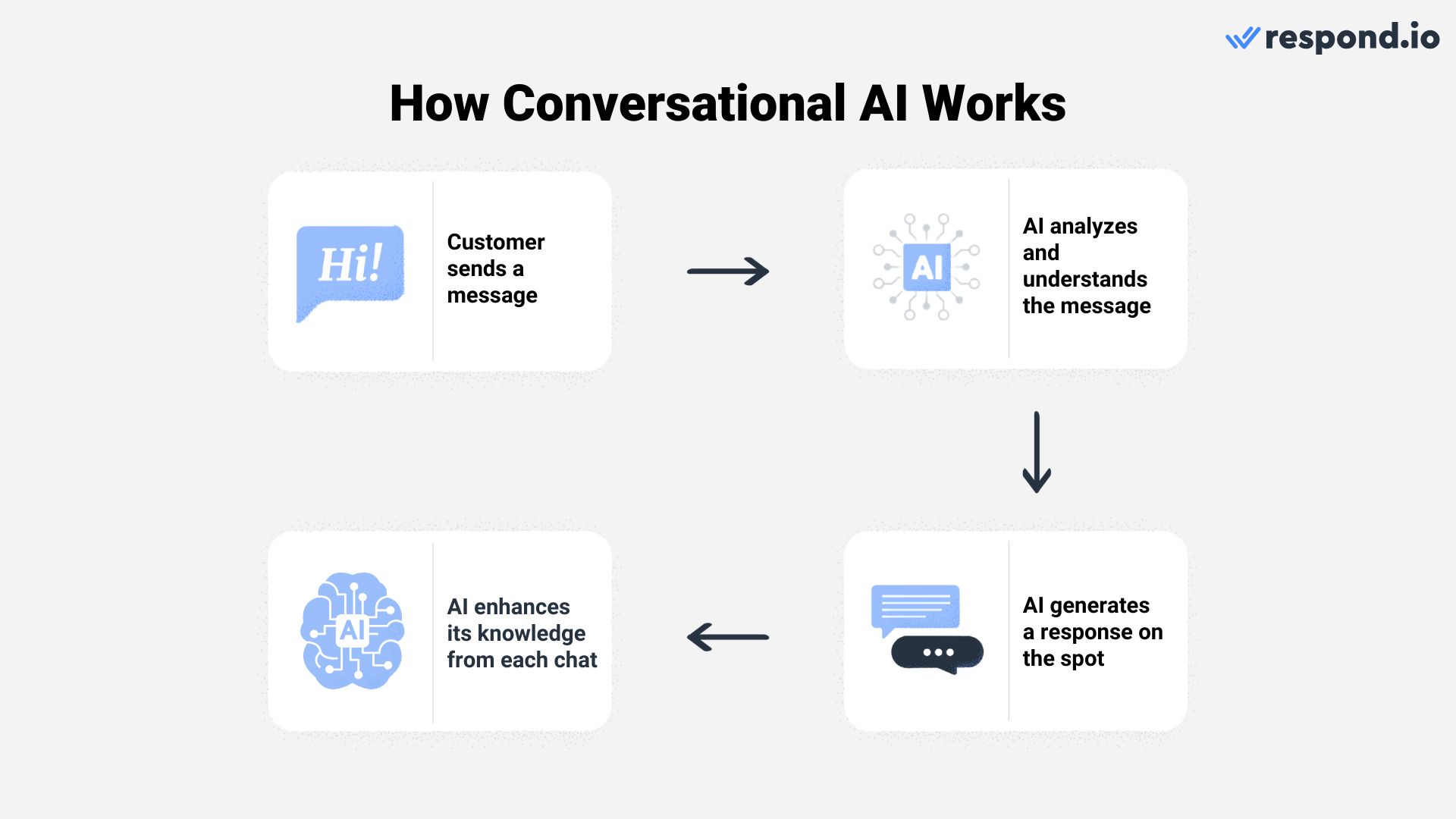

How Does Conversational AI Work?

Conversational AI combines technologies like natural language processing (NLP) and machine learning (ML) to help software systems mimic human interactions.

NLP equips these systems with the ability to understand, interpret and generate human language. It translates the nuances of human conversation into a form that software can process, allowing it to interact with people more naturally.

Meanwhile, ML enables these systems to learn and improve from data and experience. It analyzes conversation patterns and uses those signals to make informed predictions and decisions. As these systems process and analyze more data, their predictions become more accurate over time.

These two technologies feed into each other in a continuous cycle, constantly improving the AI's algorithms. Here is an example of how this works for customer conversations.

Let's say a customer asks about your product on WhatsApp. First, the AI reads the message, analyzes it and works out what the customer is really looking for.

Next, it produces a response based on that understanding. It is not just dumping pre-written answers; it is composing responses on the fly. As it interacts with customers, it learns from their replies to improve its accuracy over time.
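To make the "understand, then learn from more data" part of this loop more concrete, here is a deliberately tiny Python sketch. It is not how production conversational AI is built (real systems rely on trained language models); the `ToyIntentModel` class, its methods and the intent names are hypothetical stand-ins chosen purely for illustration.

```python
from collections import Counter, defaultdict


class ToyIntentModel:
    """Word-count intent classifier: a toy stand-in for real NLP and ML components."""

    def __init__(self) -> None:
        # intent -> how often each word appeared in examples of that intent
        self.word_counts: dict[str, Counter] = defaultdict(Counter)

    @staticmethod
    def tokenize(text: str) -> list[str]:
        # Crude "language understanding": lowercase and keep alphabetic words only.
        cleaned = "".join(ch if ch.isalpha() else " " for ch in text.lower())
        return cleaned.split()

    def learn(self, text: str, intent: str) -> None:
        # "Learning": update word statistics from one labelled message.
        self.word_counts[intent].update(self.tokenize(text))

    def predict(self, text: str) -> str:
        # Score each intent by how often it has seen the words in this message.
        words = self.tokenize(text)
        scores = {
            intent: sum(counts[w] for w in words)
            for intent, counts in self.word_counts.items()
        }
        best = max(scores, key=scores.get, default="unknown")
        return best if scores.get(best, 0) > 0 else "unknown"


model = ToyIntentModel()
model.learn("How much does the premium plan cost", "pricing")
model.learn("Where is my order", "order_status")

print(model.predict("What does it cost?"))     # pricing
print(model.predict("Do you offer refunds?"))  # unknown: no example seen yet

# After one more labelled example, the same question is recognized.
model.learn("Do you offer refunds", "refund")
print(model.predict("Do you offer refunds?"))  # refund
```

The point of the sketch is only the shape of the cycle: each new labelled interaction changes what the model can recognize next time.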

Now that you know how it works, let's answer a popular question: what makes conversational AI different from conventional chatbots?

Conventional Chatbot vs Conversational AI

Simply put, today's conversational AI technologies are a significant evolution of conventional chatbots.

Traditional chatbots operate on predefined rules and scripts, so their responses are limited to a narrow range of inputs. They can easily handle simple, predictable questions but may struggle with complex or unexpected requests.
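A minimal sketch of such a rule-based bot shows where the limitation comes from. The keywords, scripted replies and the `rule_based_reply` function below are all hypothetical; they simply illustrate exact keyword matching with a fallback.

```python
# Hypothetical scripted replies keyed by exact keyword; all values are illustrative.
SCRIPTED_REPLIES = {
    "price": "Our plans are listed on the pricing page.",
    "hours": "We are open Monday to Friday, 9 am to 6 pm.",
    "refund": "You can request a refund within 30 days of purchase.",
}

FALLBACK = "Sorry, I didn't understand that. Please choose: price, hours or refund."


def rule_based_reply(message: str) -> str:
    """Return the scripted reply for the first known keyword found in the message."""
    words = message.lower().replace("?", " ").replace(".", " ").split()
    for keyword, reply in SCRIPTED_REPLIES.items():
        if keyword in words:
            return reply
    return FALLBACK


print(rule_based_reply("What is the price?"))      # matches the keyword "price"
print(rule_based_reply("How much does it cost?"))  # same intent, no keyword: fallback
```

The second message asks the same thing as the first, but because it never uses the word "price", the bot falls back to its default reply.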

Conversational AI, which employs advanced technologies like ML and NLP, generates responses dynamically based on user input instead of being restricted to a set script. It draws answers from the AI's extensive knowledge base to handle a wider range of topics and adapt to ambiguous or contextual questions.

| Aspect | Conventional Chatbot | Conversational AI |
|---|---|---|
| Functionality | Able to answer basic questions | Able to address more complex questions |
| Response mechanism | Only responds to exact keywords | Generates responses based on user input |
| Adaptability | Unable to deviate from predetermined chat paths | Adapts to unexpected queries using machine learning (ML) |
| Knowledge base | Relies on a database of predefined questions and answers | Draws on the AI's broad knowledge |

Furthermore, conversational AI systems are more adept at recognizing and adapting to linguistic nuances such as slang, idioms or regional dialects. This allows them to hold more human-like interactions.

In short, while conventional chatbots are rule-based and limited in scope, conversational AI systems offer a more flexible and adaptable approach, delivering a conversational experience that resembles human interaction.

So, do businesses need conversational AI for customer conversations? We will explore the answer in the next section.

Turn customer conversations into business growth with respond.io. ✨

Manage calls, chats and emails in one place!

Why Conversational AI is Revolutionizing Customer Conversations

Before getting into the benefits of conversational AI, you should first understand its significance in customer communication. Today, customer conversations can take place over multiple channels, including phone calls, emails and messaging apps.

With the growing preference for messaging apps over emails or calls, these channels have opened up opportunities for businesses to engage, delight and convert customers. In fact, one study reveals that 75% of customers make a purchase after chatting on a messaging app, highlighting its effectiveness as a conversion tool.

“While messaging channels offer numerous opportunities, businesses often hesitate to use them as part of their customer strategy. This is because handling high volumes of conversations can be challenging and they do not want to sacrifice service quality.

“This is where conversational AI adds value by providing prompt, high-quality responses at a much larger scale and at a lower cost,” said Gerardo Salandra, Co-Founder and CEO of respond.io and President of The Artificial Intelligence Society of Hong Kong.

With that understanding, let's explore in more detail how conversational AI can substantially benefit your business.

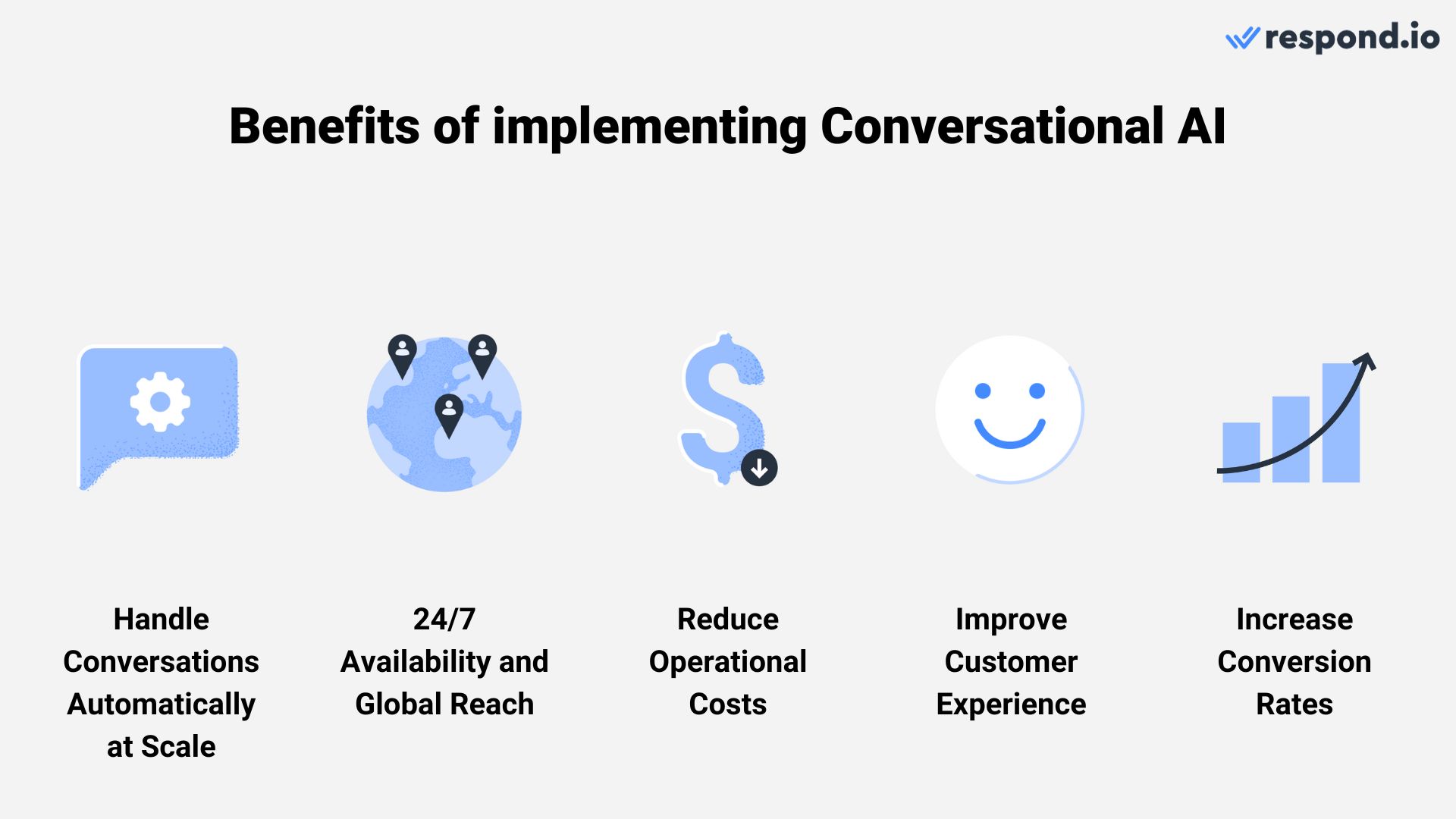

Benefits of Conversational AI

Gartner predicts that by 2026, one in 10 agent interactions will be automated and that conversational AI deployments in contact centers will reduce agent labor costs by $80 billion.

Imagine a team of 10 agents dedicated to providing high-quality responses, each limited to handling a handful of conversations at a time. Implementing conversational AI changes this scenario dramatically.

Unlike human agents, conversational AI operates around the clock, offering constant support to customers worldwide regardless of time zone. In addition, its ability to translate and respond in multiple languages extends your global reach, breaks down language barriers and broadens your customer base.

Better still, the AI does all of this while maintaining high-quality responses at a much larger scale. It can handle hundreds of conversations simultaneously, more efficiently and at a lower cost.

Supporting this, one study reveals that half of the 300 contact center and IT leaders surveyed reported that conversational AI helped lower operational costs.

With AI managing up to 87% of routine customer interactions automatically, it significantly reduces the need for human intervention while maintaining quality on par with human interactions. This efficiency has led to higher agent productivity and faster resolution of customer issues.

Clearly, this trend goes beyond cost reduction. It significantly increases efficiency in managing high volumes of conversations and helps agents handle high-value conversations effectively.

Moreover, combining AI and human agents ensures that customer interactions are empathetic and personalized. As customers receive fast, accurate responses that meet their needs, businesses can improve customer satisfaction and boost conversion rates.

This, in turn, gives businesses a competitive edge, fostering growth and helping them outpace their competitors. Having discussed the benefits, let's explore the challenges and limitations.

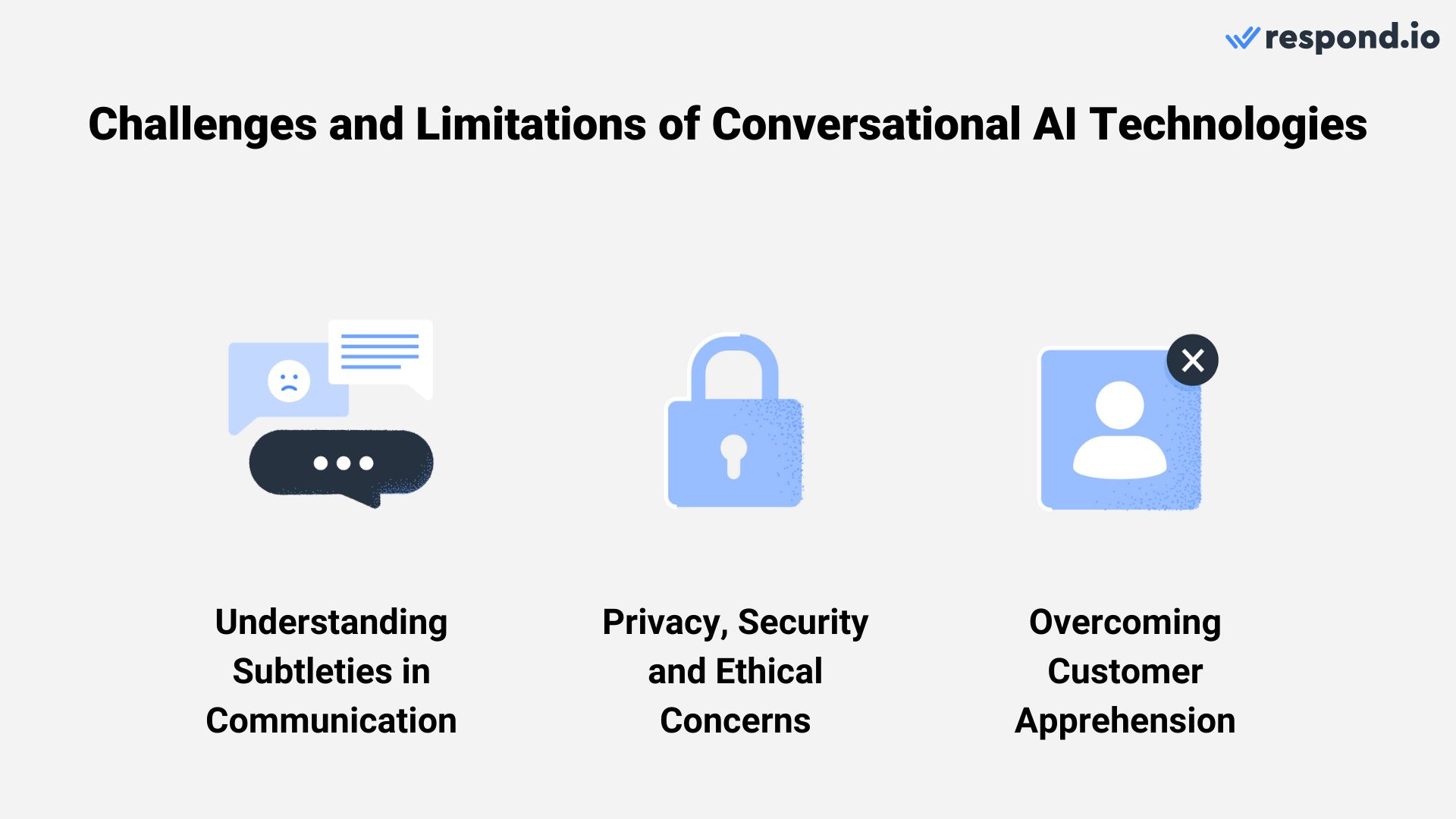

Challenges and Limitations of Conversational AI

Incorporating conversational AI into customer interactions presents several challenges, despite its potential to streamline communication.

One significant limitation is the AI's difficulty in understanding nuances of human communication such as sarcasm, cultural context and emotional tone. This becomes particularly evident in situations that require high emotional intelligence, where human oversight is indispensable.

In addition, relying on extensive datasets raises concerns about customer privacy and security. Complying with regulations like GDPR and CCPA is essential, but it is also important to meet customer expectations regarding the ethical use of data. Businesses must ensure that AI technologies are legally compliant, transparent and unbiased to maintain trust.

Customer apprehension also poses a challenge, often stemming from concerns about data privacy and the AI's ability to answer complex queries. Mitigating this requires transparent communication about the AI's capabilities and robust data privacy measures to reassure customers.

Now that you have all the essential information about conversational AI, it is time to look at how to implement it in customer conversations and the best practices for using it effectively.


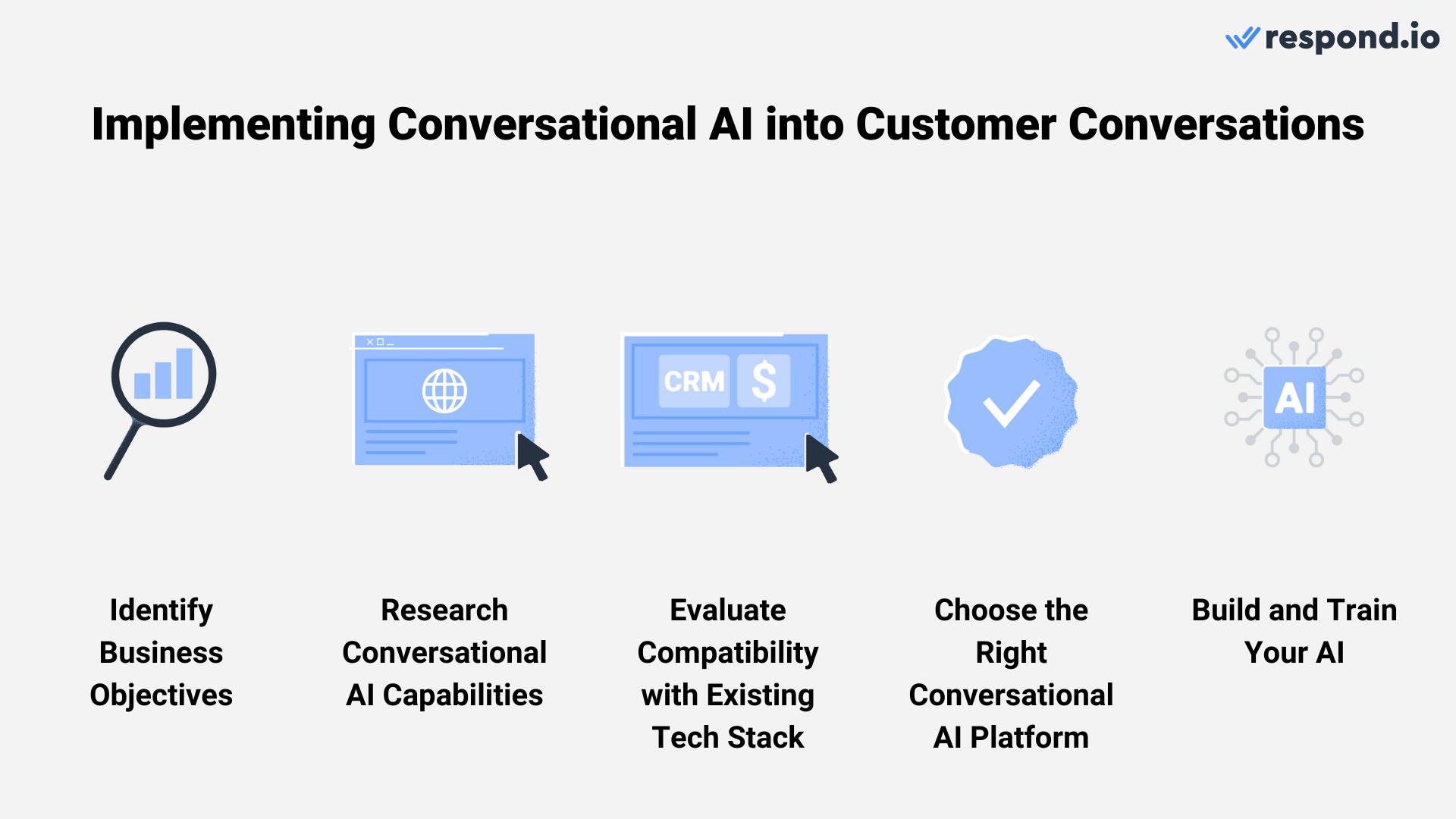

How to Use Conversational AI for Customer Conversations

Integrating conversational AI into customer interactions goes beyond choosing a suitable platform; it also involves a series of other essential steps.

The journey involves five key steps:

Identify your business goals

Research the capabilities you need

Ensure compatibility with your existing tech stack

Choose the right AI platform

Train the AI to align with your goals

Let's explore how to navigate these steps for a successful conversational AI implementation.

Identify Your Goals

Start by clearly defining the specific business goals you aim to achieve with conversational AI. Identify the areas where it can add the most value, whether in marketing, sales or customer support.

For example, your goals might include automatically managing high volumes of conversations, improving customer engagement, resolving cases efficiently, personalizing buying journeys, delivering accurate information and more.

Your goals will serve as a roadmap for selecting the right AI tools and tailoring them to your specific needs. With your goals clearly defined, the next step is to research the specific capabilities your conversational AI platform needs to have.

Research and Identify the Capabilities You Need

Based on your goals, consider whether conventional chatbots are sufficient or whether your business requires advanced AI capabilities. Note that some providers may label traditional chatbots as "AI" even though they lack technologies like NLP and ML.

Some capabilities that conversational AI brings include tailoring interactions using customer data, analyzing past purchases to make recommendations, understanding voice recordings, accessing your knowledge bases for accurate answers and more.

Once you clearly understand the capabilities you need, a crucial factor to consider before choosing a conversational AI platform is compatibility with your current software stack.

Assess Compatibility with Your Existing Tech Stack

When considering a conversational AI platform, make sure it can integrate seamlessly with your existing software, such as your CRM or e-commerce platforms. This ensures a smooth workflow and prevents integration issues.

Once you clearly understand your needs and how they fit with your current systems, the next step is to select the best platform for your business.

Choose the Right Conversational AI Platform

Selecting the right conversational AI platform is critical, as your business will rely heavily on it to manage customer conversations. If your business is growing rapidly, look for a solution that is scalable and adaptable to future needs and technological advances.

The right platform should offer all the features you need, ease of integration, robust support for high conversation volumes and the flexibility to evolve with your business.

Most importantly, the platform should adhere to global data protection regulations such as GDPR and CCPA, ensuring robust data privacy and security. With the right platform chosen, the next step is to focus on training your AI.

Train AI to Align with Your Business Goals

Depending on the platform you choose, you can train your AI Agent to mirror the efficiency of your best human agents. You can integrate the AI into your current workflows, letting it act as a first responder that handles routine questions over chat and calls and routes more complex or sensitive conversations to human agents.

In addition, training your AI to provide accurate answers is crucial. This involves supplying it with up-to-date information from existing resources, such as your knowledge base articles or FAQs. Doing so keeps the AI relevant and effective at handling customer inquiries, ultimately helping you achieve your business goals.

Now that you know what it takes to implement conversational AI in customer conversations, let's look at some best practices.

Best Practices for Using Conversational AI

Incorporating conversational AI into your customer service strategy can significantly increase efficiency and customer satisfaction.

However, to get the most out of this technology, it is important to follow certain best practices.

Align the AI's Personality with Your Brand Tone

By aligning the AI's personality with your brand's tone, you improve the customer experience, making conversations feel more personal and relatable. This approach not only reinforces your brand identity but also fosters a stronger connection with your audience.

Having matched the AI's personality to your brand tone, let's now explore another crucial best practice: continuous evaluation and education.

Continuously Evaluate and Educate Your AI

The effectiveness of conversational AI depends on its ability to learn and adapt. Continuously evaluate its performance to make sure it meets your goals, and keep it updated with new information.

Regular updates to its knowledge ensure the AI remains relevant and effective at handling diverse customer interactions. This ongoing process of evaluation and education is critical, but it is also important to recognize situations where human intervention is more appropriate.

Know When to Escalate to Human Agents

Despite the AI's sophistication, certain complex or sensitive issues may require human intervention. Build in a seamless escalation path to human agents for such scenarios, ensuring the transition is smooth and that agents have quick access to the context of the interaction.
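As a rough illustration of what an escalation path can look like in practice, the Python sketch below escalates when the AI's confidence is low or the message touches a sensitive topic, and bundles recent messages for the human agent. The trigger words, the 0.7 threshold and every name in it (`should_escalate`, `handoff_summary`, `Conversation`) are assumptions made for this example, not features of any particular platform.

```python
from dataclasses import dataclass, field

# Hypothetical triggers and threshold; adjust these to your own policies.
SENSITIVE_TERMS = {"complaint", "legal", "cancel", "refund"}
CONFIDENCE_THRESHOLD = 0.7


@dataclass
class Conversation:
    customer: str
    messages: list[str] = field(default_factory=list)


def should_escalate(message: str, ai_confidence: float) -> bool:
    """Escalate when the AI is unsure or the topic looks sensitive."""
    cleaned = message.lower().replace(",", " ").replace(".", " ").replace("?", " ")
    words = set(cleaned.split())
    return ai_confidence < CONFIDENCE_THRESHOLD or bool(words & SENSITIVE_TERMS)


def handoff_summary(conversation: Conversation, last_n: int = 3) -> str:
    """Bundle recent messages so the human agent sees the context right away."""
    recent = conversation.messages[-last_n:]
    return f"Customer: {conversation.customer} | Recent messages: " + " / ".join(recent)


chat = Conversation("Ana", ["Hi", "My invoice is wrong", "I want to cancel my plan."])
if should_escalate(chat.messages[-1], ai_confidence=0.95):
    print(handoff_summary(chat))
```

Passing the summary along with the handoff is what keeps the transition smooth: the agent does not have to ask the customer to repeat themselves.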

This leads to the next best practice: training human agents to leverage AI tools.

Train Human Agents to Leverage AI

Depending on the features of your chosen platform, you can equip agents with sophisticated AI tools to enhance their customer interactions. Let's take respond.io as an example.

Respond AI prompts can help agents refine their messages, ensuring clarity and precision in communication. They can also translate messages into different languages, reducing potential language barriers.

In addition, tools like AI Assist can be a game-changer for giving agents quick access to relevant information. This allows agents to answer customer questions quickly and accurately, improving response times and contributing to a more satisfying customer experience.

Key Takeaways

Conversational AI is at the forefront of a new era in customer engagement, offering a revolutionary shift from traditional communication methods.

As we have explored in this guide, integrating advanced conversational AI technologies empowers businesses to conduct customer interactions in a more dynamic, intuitive and personalized way. Unlike conventional chatbots, these technologies offer a depth of understanding and adaptability that enables conversations that truly resonate with customers.

Although this transformative technology is not without its own challenges, the trajectory of conversational AI is undeniably upward, continually evolving to overcome these limitations.

“AI is finally at the stage where businesses can maintain service quality at a significantly larger scale and at a lower cost. Therefore, the businesses that adopt it first will have a huge advantage over their competitors,” said Gerardo Salandra.

In a world where customer expectations are constantly rising, sticking to traditional methods could hold your business back. Conversational AI is not just a tool for the present but an investment in a future where intelligent, empathetic customer interactions are the norm.


FAQ and Troubleshooting

What is conversational AI?

Conversational AI is a type of artificial intelligence (AI) that can simulate human conversation. It is made possible by natural language processing (NLP), a field of AI that allows computers to understand and process human language, and by Google's foundation models that power new generative AI capabilities.

How do I plan a conversational AI strategy?

Start by defining clear goals and target audiences, then choose the technology and platforms that align with your goals. Next, design engaging, context-aware dialogue flows, and continuously test and refine them based on user feedback and interaction data.

What should I look for in conversational AI software?

Look for the ability to integrate with your existing systems. It is also crucial to consider the user experience, customization options and the scalability of the software to adapt to growing business needs.

Need guidance on selecting the best conversational AI platform for your business? Our in-depth article will provide the insights you need. And if you are ready to start improving customer conversations with AI, begin your journey with respond.io, an AI-powered customer conversation management software. Try it for free today!

Can I use conversational AI to follow up with customers?

If conversations stall while waiting for the customer's reply, you can use an AI Agent to nudge them with a relevant question. For example, respond.io's AI Agent goes beyond simple time-based reminders or workflow triggers. It uses the conversation history to understand context and determines when and how to follow up. This understanding helps avoid contacting customers whose issues are already resolved and allows it to send personalized, human-like reminders at the right time.
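For contrast, the sketch below shows the kind of simple rule the answer above says an AI Agent goes beyond: a time-based check that also skips resolved conversations. It is not respond.io's implementation; the `needs_follow_up` function, its parameters and the 24-hour default are hypothetical, and the context understanding described above would require a language model rather than fixed rules like these.

```python
from datetime import datetime, timedelta


def needs_follow_up(
    resolved: bool,
    last_sender: str,
    last_message_at: datetime,
    now: datetime,
    wait: timedelta = timedelta(hours=24),
) -> bool:
    """Decide whether a stalled conversation should get a reminder."""
    if resolved:
        return False  # never nudge a customer whose issue is already closed
    if last_sender != "business":
        return False  # the customer spoke last, so a reply is due, not a reminder
    return now - last_message_at >= wait


now = datetime(2024, 5, 2, 12, 0)
last_message = datetime(2024, 5, 1, 9, 0)  # 27 hours earlier

print(needs_follow_up(False, "business", last_message, now))  # True
print(needs_follow_up(True, "business", last_message, now))   # False
```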
